Sample size is simply the number of people whose responses you’ll collect in your survey. Instead of asking everyone in your target population (which is often impossible), you take a sample and use it to draw conclusions about the larger group.

For example:

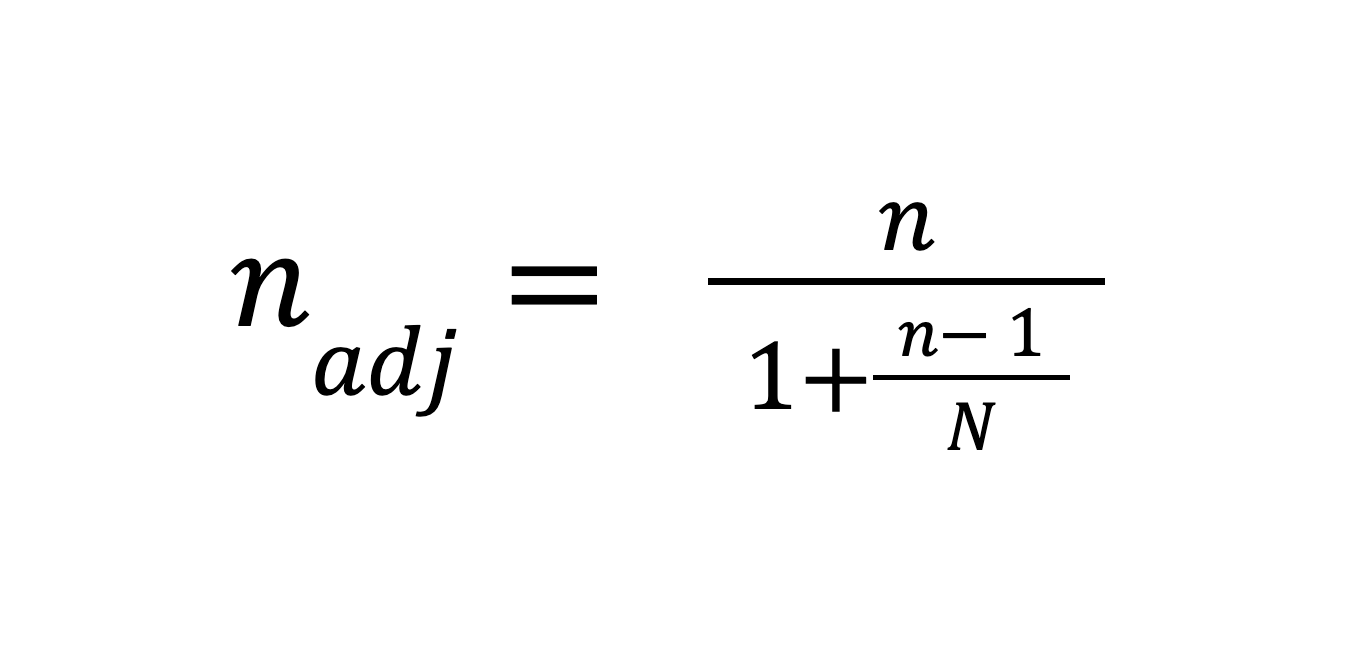

The most widely used formula (often called Cochran’s formula) is:

$$n_0 = \frac{Z^2 \, p\,(1 - p)}{E^2}$$

Where:

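Confidence level (Z-score): How confident you want to be that the true value falls within your margin of error. Each level corresponds to a Z-score: 1.645 for 90%, 1.96 for 95%, and 2.576 for 99%.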

Margin of error (E): The “wiggle room” in your results. For example, ±5% means that if 60% of respondents say “yes,” the true share in the population likely lies between 55% and 65%. Smaller margins of error require larger sample sizes.

Estimated proportion (p): The share of your population expected to give a certain response. If you’re unsure, use 0.5 (50%). Because p(1 − p) is largest at p = 0.5, this is the most conservative choice and ensures your sample size isn’t underestimated.

Population size (N): The total number of people you could potentially survey. For very large populations (10,000+), the formula above works fine. For smaller groups, apply the finite population correction, where n₀ is the result of the formula above:

$$n = \frac{n_0}{1 + \frac{n_0 - 1}{N}}$$

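Example 1: Large population

Suppose you want 95% confidence (Z = 1.96) and a ±5% margin of error (E = 0.05), and you use the conservative p = 0.5:

$$n_0 = \frac{1.96^2 \times 0.5 \times (1 - 0.5)}{0.05^2} = \frac{0.9604}{0.0025} \approx 384$$

So, you’d need about 384 responses whether your audience is 20,000 or 2 million.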

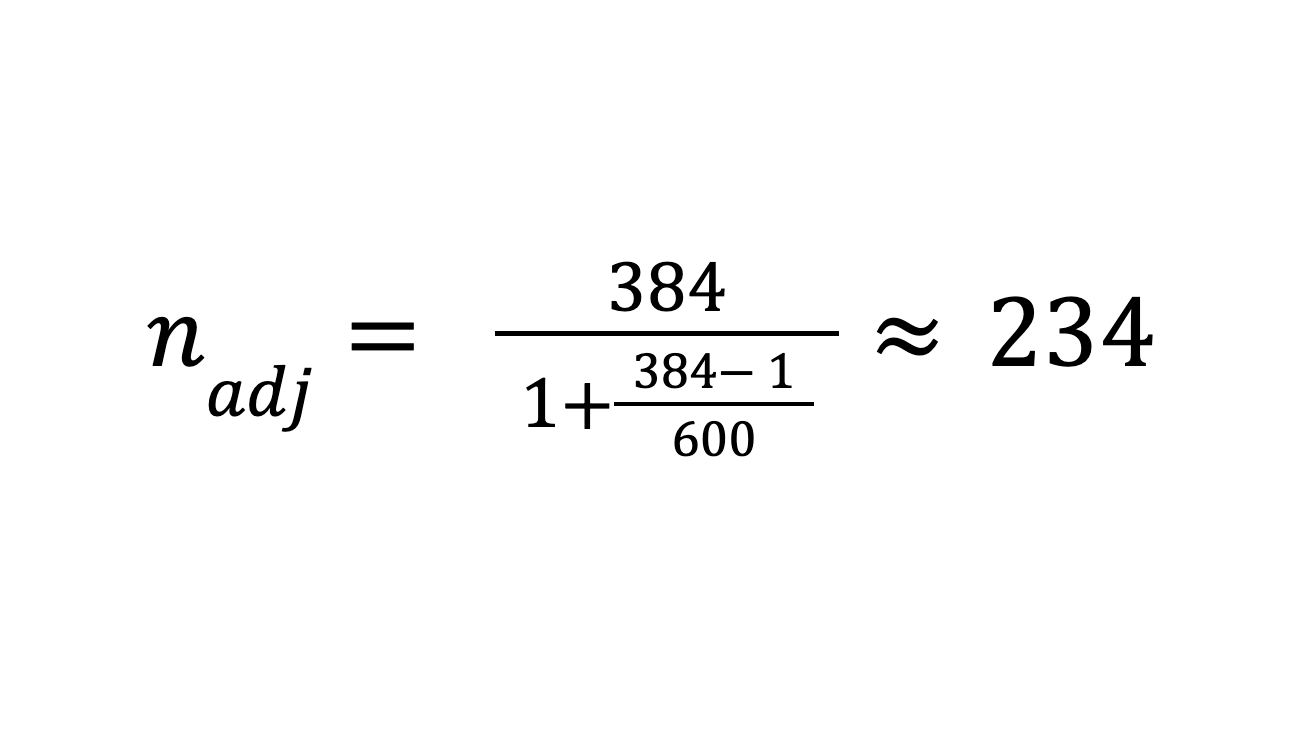

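Example 2: Small population

Same inputs, but your customer base (N) is only 600. Applying the correction:

$$n = \frac{384.16}{1 + \frac{384.16 - 1}{600}} \approx 234$$

Here, you’d only need about 234 responses to reach the same ±5% margin of error at 95% confidence.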
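The sample-size formula and its small-population correction fit in a few lines of Python. This is a minimal sketch that assumes the standard library’s `statistics.NormalDist` to turn a confidence level into a Z-score. The function names `z_score`, `cochran_n0`, and `finite_population_n` are our own illustrative choices and do not come from any survey tool.

```python
import math
from statistics import NormalDist


def z_score(confidence: float) -> float:
    """Two-sided Z-score for a confidence level, e.g. 0.95 -> about 1.96."""
    return NormalDist().inv_cdf(1 - (1 - confidence) / 2)


def cochran_n0(confidence: float = 0.95, margin: float = 0.05, p: float = 0.5) -> float:
    """Sample size for a very large population: Z^2 * p(1 - p) / E^2."""
    z = z_score(confidence)
    return z ** 2 * p * (1 - p) / margin ** 2


def finite_population_n(n0: float, population: int) -> float:
    """Shrink n0 for a population of size N: n0 / (1 + (n0 - 1) / N)."""
    return n0 / (1 + (n0 - 1) / population)


n0 = cochran_n0()  # 95% confidence, ±5% margin, p = 0.5
print(round(n0))                            # 384 (Example 1)
print(round(finite_population_n(n0, 600)))  # 234 (Example 2)
print(math.ceil(n0))                        # 385 if you always round up
```

Many calculators round up (385 instead of 384) so the achieved margin of error never exceeds the target. Either convention is fine for planning purposes.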

Sample size expectations differ depending on whether you’re collecting quantitative or qualitative data.

In quantitative research, the goal is statistical accuracy and generalizability. That means you usually need a larger sample size so your numbers reliably represent the wider population. For example, if you want to estimate what percentage of your customers prefer Feature A over Feature B, you might need a few hundred responses to reach a reasonable margin of error.

In qualitative research, the focus is on depth of understanding rather than broad representation. Here, smaller sample sizes are completely acceptable because the value comes from rich, detailed insights rather than statistics. For instance, interviewing 15–30 participants can be enough to uncover recurring themes, motivations, or pain points that you wouldn’t spot in a large-scale survey.

The key difference is that quantitative studies emphasize breadth, while qualitative studies emphasize depth. In practice, many teams use both approaches together—starting with qualitative interviews to explore ideas and then running a larger quantitative survey to confirm how widespread those ideas are across their audience.

Before worrying about numbers, it’s worth stepping back and asking: who exactly do you want to hear from? A clear definition of your population ensures your results actually reflect the group you care about.

Think beyond broad categories like “online shoppers.” Instead, narrow in on the specifics—say, US women aged 25–40 who buy beauty products monthly. You can also break it down by traits that really matter to your goals: geography, age, business size, or something else unique to your audience. The sharper the focus, the more reliable the insights.

Not every study needs laser-level accuracy. Sometimes a quick gut check is enough, while other times you’ll want the kind of precision you can bet big decisions on.

Confidence level: Most business research uses 95%. For early-stage tests, 80–90% might do, while high-stakes fields like medicine often go as high as 99%.

Margin of error: A ±5% margin usually works well for business insights. If accuracy really matters, you may need ±2–3%—just remember, the smaller the error, the larger the sample.
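To see how quickly the numbers grow, the sketch below tabulates the large-population sample size for a few common confidence levels and margins. It assumes p = 0.5 and rounds up to a whole response. `required_n` is a hypothetical helper name.

```python
import math
from statistics import NormalDist


def required_n(confidence: float, margin: float, p: float = 0.5) -> int:
    """Large-population sample size, rounded up to a whole response."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return math.ceil(z ** 2 * p * (1 - p) / margin ** 2)


margins = (0.05, 0.03, 0.02)
for confidence in (0.90, 0.95, 0.99):
    cells = ", ".join(f"±{m:.0%}: {required_n(confidence, m)}" for m in margins)
    print(f"{confidence:.0%} confidence -> {cells}")
```

Because E is squared in the denominator, halving the margin of error roughly quadruples the required sample.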

Sample size ranges: As a rule of thumb, aim for at least 100 responses if you want directionally useful results. At 95% confidence, 100 responses give a margin of error of roughly ±10%. If your total population is small (say, fewer than 100 people), it’s often best to just reach out to everyone.
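Working backward from a response count shows what “directionally useful” means in numbers. This sketch inverts the formula for a proportion and applies the standard finite population correction when a population size is given. `margin_of_error` is a hypothetical helper, and p = 0.5 is assumed by default.

```python
import math
from statistics import NormalDist
from typing import Optional


def margin_of_error(n: int, confidence: float = 0.95, p: float = 0.5,
                    population: Optional[int] = None) -> float:
    """Approximate margin of error for a proportion estimated from n responses."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    moe = z * math.sqrt(p * (1 - p) / n)
    if population is not None:
        # Finite population correction: the margin shrinks as n approaches N.
        moe *= math.sqrt((population - n) / (population - 1))
    return moe


print(f"{margin_of_error(100):.1%}")                 # 9.8%
print(f"{margin_of_error(80, population=100):.1%}")  # 4.9% (80 of 100 people)
```

The second call shows why surveying everyone makes sense for small groups. Once you reach most of the population, the uncertainty left over is small, and if everyone responds it disappears.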

On paper, your sample size may look perfect. In reality, people get busy, ignore invitations, or drop out halfway. That’s normal; you just need to plan for it. For example, if you expect only about 40% of people to respond, invite about 2.5 times your target number of responses (target ÷ 0.40).
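The invitation math is simple division: target responses ÷ expected response rate, rounded up. The sketch below also lets you account for people who start but don’t finish. The name `invitations_needed` and the `completion_rate` parameter are illustrative assumptions, not features of any particular survey tool.

```python
import math


def invitations_needed(target: int, response_rate: float,
                       completion_rate: float = 1.0) -> int:
    """Invites to send so the expected number of completed surveys hits the target."""
    if not (0 < response_rate <= 1 and 0 < completion_rate <= 1):
        raise ValueError("rates must be between 0 (exclusive) and 1")
    expected_yield = response_rate * completion_rate  # completes per invitation
    return math.ceil(target / expected_yield)


print(invitations_needed(200, 0.40))        # 500, i.e. 2.5x the target
print(invitations_needed(200, 0.40, 0.80))  # 625 if 20% of starters drop out
```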

And if you’re working with hard-to-reach or niche audiences, don’t be discouraged—sometimes even 15–30 responses from early adopters of your product can give you valuable, directional insights to guide decisions.

How you structure your study can also affect the sample size you’ll need. For example, if you’re surveying clusters of people who are likely to answer similarly, like employees at the same company, you may need a larger sample. Similar answers within a cluster carry less independent information than the same number of unrelated respondents, an effect statisticians call the design effect. On the other hand, if your population naturally splits into subgroups (by age, location, or role), a stratified sample can ensure each group is represented in proportion to its size, which helps keep your results balanced.
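Proportional stratified allocation gives each subgroup the same share of the sample that it has in the population. Below is a minimal sketch, assuming you know each stratum’s size. The age bands and counts are made up for illustration. Rounding down can leave a few slots unassigned, and this sketch gives them to the strata with the largest fractional shares, which is one common convention among several.

```python
import math


def proportional_allocation(total_n: int, strata: dict[str, int]) -> dict[str, int]:
    """Split total_n across strata in proportion to each stratum's population size."""
    population = sum(strata.values())
    exact = {name: total_n * size / population for name, size in strata.items()}
    alloc = {name: math.floor(share) for name, share in exact.items()}
    # Rounding down leaves a few slots unassigned; give them to the largest remainders.
    leftover = total_n - sum(alloc.values())
    by_remainder = sorted(exact, key=lambda name: exact[name] - alloc[name], reverse=True)
    for name in by_remainder[:leftover]:
        alloc[name] += 1
    return alloc


# Hypothetical population of 10,000 customers split by age band.
print(proportional_allocation(400, {"18-34": 4200, "35-54": 3900, "55+": 1900}))
# {'18-34': 168, '35-54': 156, '55+': 76}
```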

For most everyday surveys, like customer feedback, employee engagement, or product research, simple calculators combined with the best practices above are more than enough. They give you a solid sample size quickly and with little effort.

But if your survey has higher stakes, stricter accuracy requirements, or a complex design, you may need more rigorous methods to determine sample size. Here are the most common advanced approaches:

Power analysis is a statistical method used to estimate how many responses you need to reliably detect a real effect (such as a difference between groups or a relationship between variables) if it actually exists.

When to use it:

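Key inputs: the smallest effect you want to be able to detect (effect size), the significance level (alpha, commonly 5%), the desired statistical power (commonly 80%), and the expected variability or baseline rate of your metric.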

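Example: Suppose you run an A/B test and want to detect at least a 5% lift in conversion rate, with 80% power (an 80% chance of detecting the lift if it is real) and a 5% alpha level (a 5% risk of a false positive). Depending on your baseline conversion rate, a power analysis might show you need around 800 responses per group. Be clear about what “5%” means: a lift of five percentage points needs far fewer responses than a 5% relative increase, which can require tens of thousands per group.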
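For a conversion-rate A/B test, a common normal-approximation formula for comparing two proportions gives the number of responses needed per group. This is a sketch under stated assumptions: a two-sided test, equal group sizes, and a 10% baseline conversion rate chosen purely for illustration. Dedicated statistical packages (for example, the power module in Python’s `statsmodels`) offer more exact methods.

```python
import math
from statistics import NormalDist


def n_per_group(p_baseline: float, p_variant: float,
                alpha: float = 0.05, power: float = 0.80) -> int:
    """Responses per group to detect p_baseline -> p_variant (two-sided z-test)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    variance = p_baseline * (1 - p_baseline) + p_variant * (1 - p_variant)
    effect = p_variant - p_baseline
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Hypothetical 10% baseline conversion rate.
print(n_per_group(0.10, 0.15))   # 683 per group for +5 percentage points
print(n_per_group(0.10, 0.105))  # +5% relative lift: tens of thousands per group
```

The effect size enters the formula squared, so shrinking the lift you want to detect by a factor of ten multiplies the required sample by roughly a hundred.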

In a simulation study, you create artificial datasets that mimic your real-world situation and “simulate” running the study many times to see which sample size consistently gives reliable results.

When to use it: When data or study designs are too complex for neat formulas, e.g., skewed data, clustered data, or non-standard models.

Example: Say you’re measuring time spent on a website, but the distribution is highly skewed (some people stay for seconds, some for hours). Instead of relying on a formula that assumes a normal distribution, you simulate the study 1,000 times using realistic assumptions. You might find that a sample of 300 users consistently gives you stable estimates of average time on site.
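A simulation like this can be sketched with nothing but the standard library. Here we assume time on site follows a lognormal distribution (a common choice for skewed, positive data) with made-up parameters `mu=4.0` and `sigma=1.2`. We also assume a sample size counts as “stable” when at least 95% of simulated studies produce a mean within ±20% of the true mean. Both the distribution and that threshold are illustrative assumptions you would replace with your own.

```python
import math
import random
import statistics


def share_within(n, tolerance=0.20, mu=4.0, sigma=1.2, runs=1000, seed=1):
    """Fraction of simulated studies whose sample mean lands within ±tolerance of the truth."""
    rng = random.Random(seed)
    true_mean = math.exp(mu + sigma ** 2 / 2)  # mean of a lognormal distribution
    hits = 0
    for _ in range(runs):
        sample = [rng.lognormvariate(mu, sigma) for _ in range(n)]
        if abs(statistics.fmean(sample) - true_mean) <= tolerance * true_mean:
            hits += 1
    return hits / runs


def smallest_stable_n(candidates, required_share=0.95, **kwargs):
    """First candidate sample size that meets the stability target, or None."""
    for n in candidates:
        if share_within(n, **kwargs) >= required_share:
            return n
    return None


print(smallest_stable_n([100, 200, 300, 400, 500, 600]))
```

The same loop works for any data-generating process you can write down, such as clustered data or a more complex model. That flexibility is the main advantage of simulation over closed-form formulas.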

Practical “rules of thumb” tailored to specific study designs — especially common in education, sociology, or psychology.

When to use it:

Example: If you’re studying employee satisfaction across companies, it’s not enough to just survey 1,000 people from a single company. You’d want responses spread across many companies, with enough employees per company to capture variation within groups. A common approach is to aim for a reasonable number of groups (e.g., 20–30) with enough people in each group (e.g., 20–30 per group) so that both group-level and individual-level insights are reliable.
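One way to see why spreading responses across companies matters is the design effect, DEFF = 1 + (m − 1) × ICC. Here, m is the number of respondents per group, and ICC (the intraclass correlation) measures how similar people in the same group are. Dividing the total sample by DEFF gives a rough “effective” sample size. The sketch below assumes an ICC of 0.05, a made-up value for illustration; real ICCs vary by topic and organization.

```python
def design_effect(per_group: float, icc: float) -> float:
    """Kish design effect for equal-sized clusters: 1 + (m - 1) * ICC."""
    return 1 + (per_group - 1) * icc


def effective_sample_size(n_groups: int, per_group: int, icc: float) -> float:
    """Total responses deflated by the design effect."""
    return n_groups * per_group / design_effect(per_group, icc)


ICC = 0.05  # hypothetical within-company similarity
print(round(effective_sample_size(30, 25, ICC)))   # 341 (30 companies x 25 people = 750 responses)
print(round(effective_sample_size(1, 1000, ICC)))  # 20 (1,000 people from one company)
```

Even this view understates the single-company problem. With only one group, you have no information at all about how companies differ from each other.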

Official or widely accepted guidelines that dictate minimum sample sizes or statistical requirements in specific fields.

When to use it:

Example: In clinical trials, regulators like the FDA may require studies to have at least 80% power and a 5% alpha level. They may also set minimum sample sizes for safety or efficacy studies, depending on the condition and population.

Once you’ve thought through the numbers, the next step is putting your survey into action. That’s where a tool like QuestionScout comes in handy.

You can design a professional survey in just a few minutes, with control over themes, fonts, and colors so it matches your brand. Features like skip logic and branching help keep surveys concise and relevant, guiding respondents through different paths based on their answers.

You can share your survey through a direct link, email it to your target audience, or embed it on your website, depending on where respondents are most likely to engage. Responses are collected and organized automatically, making it easier to focus on what matters most: analyzing the results and drawing useful insights.